Apple, Google raise new concerns by yanking Russian app

BERKELEY, Calif. (AP) — Big Tech companies that operate around the globe have long promised to obey local laws and to protect civil rights while doing business. But when Apple and Google capitulated to Russian demands and removed a political-opposition app from their local app stores, it raised worries that two of the world’s most successful companies are more comfortable bowing to undemocratic edicts — and maintaining a steady flow of profits — than upholding the rights of their users.

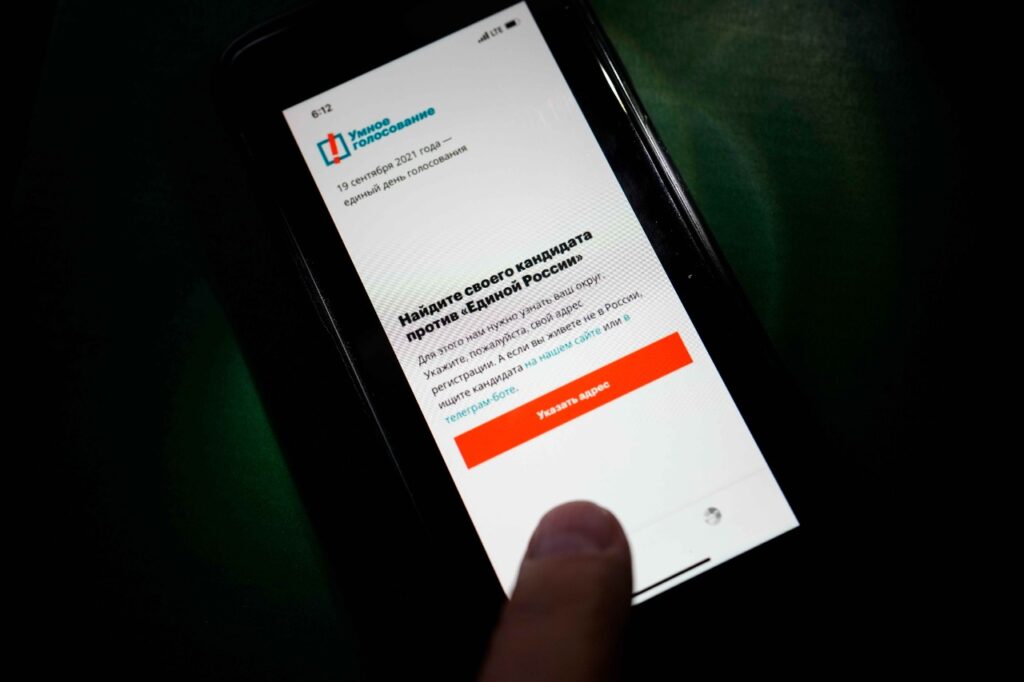

The app in question, called Smart Voting, was a tool for organizing opposition to Russian President Vladimir Putin ahead of elections held over the weekend. The ban levied last week by a pair of the world’s richest and most powerful companies galled supporters of free elections and free expression.

“This is bad news for democracy and dissent all over the world,” said Natalia Krapiva, tech legal counsel for Access Now, an internet freedom group. “We expect to see other dictators copying Russia’s tactics.”

Technology companies offering consumer services from search to social media to apps have long walked a tightrope in many of the less democratic nations of the world. As Apple, Google and other major companies such as Amazon, Microsoft and Facebook have grown more powerful over the past decade, so have government ambitions to harness that power for their own ends.

“Now this is the poster child for political oppression,” said Sascha Meinrath, a Penn State University professor who studies online censorship issues. Google and Apple “have bolstered the probability of this happening again.”

Neither Apple nor Google responded to requests for comment from The Associated Press when the news of the app’s removal broke last week; both remained silent this week as well.

Google also denied access to two documents on its online service Google Docs that listed candidates endorsed by Smart Voting, and YouTube blocked similar videos.

According to a person with direct knowledge of the matter, Google faced legal demands by Russian regulators and threats of criminal prosecution of individual employees if it failed to comply. The same person said Russian police visited Google’s Moscow offices last week to enforce a court order to block the app. The person spoke to the AP on condition of anonymity because of the sensitivity of the issue.

Google’s own employees have reportedly blasted the company’s cave-in to Putin’s power play by posting internal messages and images deriding the app’s removal.

That sort of backlash within Google has become more commonplace in recent years as the company’s ambitions appeared to conflict with its one-time corporate motto, “Don’t Be Evil,” adopted by cofounders Larry Page and Sergey Brin 23 years ago. Neither Page nor Brin, whose family fled the former Soviet Union for the U.S. when he was a boy, is currently involved in Google’s day-to-day management, and that motto has long since been set aside.

Apple, meanwhile, lays out a lofty “Commitment to Human Rights” on its website, although a close read of that statement suggests that when legal government orders and human rights are at odds, the company will obey the government. “Where national law and international human rights standards differ, we follow the higher standard,” it reads. “Where they are in conflict, we respect national law while seeking to respect the principles of internationally recognized human rights.”

A recent report from the Washington nonprofit Freedom House found that global internet freedom declined for the 11th consecutive year and is under “unprecedented strain” as more nations arrested internet users for “nonviolent political, social, or religious speech” than ever before. Officials suspended internet access in at least 20 countries, and 21 states blocked access to social media platforms, according to the report.

For the seventh year in a row, China held the top spot as the worst environment for internet freedom. But such threats take several forms. Turkey’s new social media regulations, for instance, require platforms with over a million daily users to remove content deemed “offensive” within 48 hours of being notified, or risk escalating penalties including fines, advertising bans and limits on bandwidth.

Russia, meanwhile, added to the existing “labyrinth of regulations that international tech companies must navigate in the country,” according to Freedom House. Overall online freedom in the U.S. also declined for the fifth consecutive year, the group said, citing conspiracy theories and misinformation about the 2020 elections as well as surveillance, harassment and arrests in response to racial-injustice protests.

Big Tech companies have generally agreed to abide by country-specific rules for content takedowns and other issues in order to operate in these countries. That can range from blocking posts that deny the Holocaust in Germany and elsewhere in Europe, where such content is illegal, to outright censorship of opposition parties, as in Russia.

The app’s expulsion was widely denounced by opposition politicians. Leonid Volkov, a top strategist to jailed opposition leader Alexei Navalny, wrote on Facebook that the companies “bent to the Kremlin’s blackmail.”

Navalny’s ally Ivan Zhdanov said on Twitter that the politician’s team is considering suing the two companies. He also mocked the move: “Expectations: the government turns off the internet. Reality: the internet, in fear, turns itself off.”

It’s possible that the blowback could prompt either or both companies to reconsider their commitment to operating in Russia. Google made a similar decision in 2010 when it pulled its search engine out of mainland China after the Communist government there began censoring search results and videos on YouTube.

Russia isn’t a major market for either Apple, whose annual revenue this year is expected to approach $370 billion, or Google’s corporate parent, Alphabet, whose revenue is projected to hit $250 billion this year. But profits are profits.

“If you want to take a principled stand on human rights and freedom of expression, then there are some hard choices you have to make on when you should leave the market,” said Kurt Opsahl, general counsel for the digital rights group Electronic Frontier Foundation.

---

Ortutay reported from Oakland, California. Associated Press writers Daria Litvinova in Moscow and Kelvin Chan in London contributed to this story.